Emerson’s Lou Heavner, a consultant on the Advanced Automation Services team, recently co-authored a paper, Using Neural Network Technology for Virtual Sensing in Crude Refining Units, presented at ISA EXPO 2006. Lou worked with engineers from the Russian oil refiner Lukoil.

Always a great presenter, Lou shared not only his expertise on this project, but also the fact that he homebrews beer. I imagine this keeps his control application skills honed!

The paper describes the need to improve the quality of refined products by improving upon the existing practice of taking lab samples once or twice a day. The operators made changes to the process based upon the lab readings. They tried to control key temperatures and other process variables on the pre-flash, atmospheric, and vacuum columns by manipulating flows including reflux, furnace fuel, pump-arounds, and product draws.

To make these quality adjustments closer to real time, the refinery engineers wanted an alternative to costly and maintenance-prone on-line analyzers. They decided to use neural network technology to build real-time inferential property estimators that could run inside the refinery’s existing DeltaV controllers.

Lou worked with the Lukoil engineers to build ten artificial neural networks estimating properties of gasoline, kerosene, diesel, VGO, and residue on the pre-flash, atmospheric, and vacuum towers. They believe this application of virtual sensors to be one of the world’s largest on a single crude unit.

The real work comes in collecting the data needed to train the neural networks. They needed around 100 lab samples for each model, along with the continuous historical data for the process variables over the sampling period. The DeltaV Neural software helped automate the data collection and model training needed to build and prove the neural networks. Up to 20 process variables were collected as inputs in training each of the ten neural networks. Any abnormal operating conditions were identified so that data from those time periods could be excluded from the model. Any variables that had little or no effect on the model outputs were eliminated.
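To make the data-preparation steps a bit more concrete, here is a minimal sketch in Python. It is not how DeltaV Neural works internally; it uses a plain correlation screen as a simple stand-in for "little or no effect on the model outputs", and the names `drop_abnormal`, `screen_inputs`, `min_abs_corr`, and the sample layout are all assumptions made for illustration.

```python
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (0.0 if either is constant)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0
    return cov / (vx * vy) ** 0.5


def drop_abnormal(samples, abnormal_windows):
    """Remove samples whose time falls inside any (start, end) abnormal window.

    Each sample is a dict (hypothetical layout):
    {"t": time, "inputs": {name: value}, "lab": lab_value}
    """
    return [
        s for s in samples
        if not any(start <= s["t"] <= end for start, end in abnormal_windows)
    ]


def screen_inputs(samples, min_abs_corr=0.2):
    """Keep input variables whose |correlation| with the lab value meets the threshold.

    Assumption: linear correlation is only a rough proxy for an input's
    influence on a nonlinear model.
    """
    lab = [s["lab"] for s in samples]
    kept = []
    for name in samples[0]["inputs"]:
        r = pearson([s["inputs"][name] for s in samples], lab)
        if abs(r) >= min_abs_corr:
            kept.append(name)
    return kept
```

In practice you would run `drop_abnormal` first, then `screen_inputs` on what remains, and only then train a model on the surviving inputs. The threshold is a judgment call and would need tuning against real data.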

The largest challenge in the data collection effort was the lab data. It had to be accurate both in the precise time each sample was taken and in the analysis of the sample itself. The quality of the neural networks is directly affected by the accuracy of the samples. Another important factor is making sure the process data is a raw snapshot, not filtered or otherwise manipulated.
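Why the sample timestamp matters so much is easier to see in code. The sketch below pairs each lab result with the raw process reading in effect at the moment the sample was drawn, and discards the sample if no reading is recent enough. The function names, the `max_age` tolerance, and the tag name in the usage example are hypothetical, not taken from the paper.

```python
from bisect import bisect_right


def snapshot_at(history, sample_time, max_age=60.0):
    """Return the latest raw value at or before sample_time, or None.

    history: list of (timestamp_seconds, raw_value) sorted by timestamp.
    max_age: assumed tolerance in seconds; older readings are rejected.
    """
    times = [t for t, _ in history]
    i = bisect_right(times, sample_time) - 1
    if i < 0:
        return None  # no reading yet at the sample time
    t, value = history[i]
    if sample_time - t > max_age:
        return None  # reading is too stale to represent the sample
    return value


def build_training_rows(history_by_tag, lab_samples, max_age=60.0):
    """Pair each (sample_time, lab_value) with a snapshot of every tag.

    Samples missing a usable snapshot for any tag are dropped.
    """
    rows = []
    for sample_time, lab_value in lab_samples:
        inputs = {}
        for tag, history in history_by_tag.items():
            value = snapshot_at(history, sample_time, max_age)
            if value is None:
                break
            inputs[tag] = value
        else:
            rows.append((inputs, lab_value))
    return rows


# Usage with made-up numbers: the second lab sample has no recent reading.
history = {"TI101": [(0, 150.0), (60, 151.0), (120, 152.5)]}
rows = build_training_rows(history, [(65, 182.0), (500, 190.0)])
```

If the recorded sample time is off by even a few minutes during a process move, the lab value gets paired with the wrong operating point, which is exactly the kind of error that degrades the trained model.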

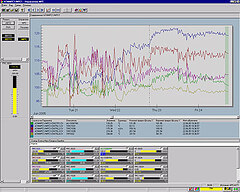

The resulting inferential sensors predicted the lab results to within a few degrees. The ones estimating lighter refined products were more accurate. The engineers have not yet closed the loop to run the control strategies based on these readings, but they do present the information to the operators, who can make adjustments more frequently than they could with samples coming only once or twice a day.