Over the past several years, I’ve covered advancements in batch analytics that improve the efficiency of batch processes and spot trouble early enough to avoid or resolve it. Batch operations involve many simultaneous, complex activities, including process holdups, lab data access, feedstock variations, unsteady operations, and concurrently running batches, all of which can pose challenges for the operators running these processes.

The goal of online batch analytics is to detect abnormal conditions early, before they negatively affect production, and to predict the end-of-batch quality parameters. The earlier an abnormal condition is spotted, the better the chance for the operators to get the batch back on track. This means decreased costs, reduced cycle times, increased yield, reduced waste, reduced variability, and improved reliability. In other words, the sooner a problem is detected, the more of these benefits can be realized.
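To make the idea of early detection concrete, here is a minimal sketch in Python. It is not the method used in any particular batch analytics product. It assumes a simple univariate approach: build an envelope of mean plus or minus three standard deviations at each time step from a few good historical batches, then report the first step at which a running batch leaves that envelope. The function names, the three-sigma limit, and the temperature values are all hypothetical.

```python
from statistics import mean, stdev


def build_envelope(historical_batches, n_sigma=3.0):
    """Return per-time-step (low, high) limits from equal-length good batches.

    Assumption: every batch is sampled at the same time steps.
    """
    envelope = []
    for samples in zip(*historical_batches):
        mu = mean(samples)
        sigma = stdev(samples)
        envelope.append((mu - n_sigma * sigma, mu + n_sigma * sigma))
    return envelope


def first_deviation(running_batch, envelope):
    """Return the index of the first sample outside the envelope, or None."""
    for step, (value, (low, high)) in enumerate(zip(running_batch, envelope)):
        if not low <= value <= high:
            return step
    return None


# Hypothetical temperature trajectories from three good batches.
historical = [
    [30.0, 32.0, 35.0, 37.0],
    [30.2, 32.1, 35.2, 37.1],
    [29.8, 31.9, 34.8, 36.9],
]
envelope = build_envelope(historical)

# Hypothetical running batch that drifts high at the third sample.
running = [30.0, 32.0, 36.5, 37.0]
deviation_step = first_deviation(running, envelope)
```

In practice many correlated variables would be monitored together rather than one at a time, but the principle is the same: compare the running batch against the behavior of known good batches and flag the departure as soon as it appears.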

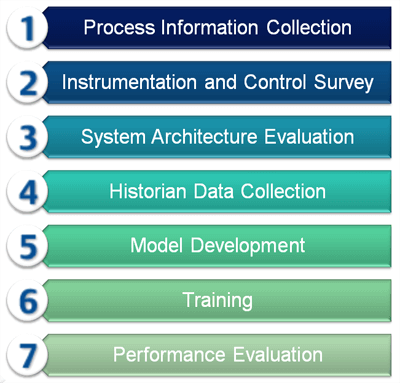

I caught up with Emerson’s David Rehbein, a Life Sciences Senior Industry Consultant, who shared with me the steps process manufacturers should follow to prepare for implementing batch analytics in their production processes. While the technology continues to be refined, steps can be taken today to prepare your plant. It begins with collecting process information.

Dave notes that forming a cross-functional team is an important part of this first step, collecting process information. The team would consist of members from operations, quality, information technology, and controls and automation. This team must have a good understanding of the process, batch control strategies, and the final products produced. By bringing in these varied perspectives and expertise, the team can identify the processes where batch analytics can help improve operations.

Next, an instrumentation and control survey should be performed. Without consistent measurement and final control, batch-to-batch repeatability is difficult to achieve. Once the measurement devices and final control elements are performing as they should, the control loops and advanced control strategies should be tuned to achieve optimum performance. If this important step is skipped, the data collected by the historian may be suspect. In an earlier post, Guidance for Good Dynamic Control Loop Performance, I highlighted where to spot issues preventing optimum loop performance.

Dave described a system architecture evaluation as the next preparatory step. Emerson consultants work with the cross-functional team to evaluate the current automation architecture and identify the data required to characterize the batch process to be modeled. By studying the material flow, an audit can be performed to make sure all the required data is accessible.
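One way to picture the accessibility audit is as a comparison between the measurements each unit in the material flow needs and the tags actually available from the historian. The Python sketch below is only an illustration under that assumption. The unit names, tag names, and the `audit_data_access` function are hypothetical and do not come from any Emerson tool.

```python
def audit_data_access(required_tags, available_tags):
    """Return {unit: [missing tags]} for required tags that are not accessible."""
    available = set(available_tags)
    gaps = {}
    for unit, tags in required_tags.items():
        missing = sorted(tag for tag in tags if tag not in available)
        if missing:
            gaps[unit] = missing
    return gaps


# Hypothetical measurements needed along the material flow.
required = {
    "feed_tank": ["FT-101.PV", "LT-101.PV"],
    "bioreactor": ["TT-201.PV", "pH-201.PV", "DO-201.PV"],
}

# Hypothetical list of tags the historian currently collects.
available = ["FT-101.PV", "LT-101.PV", "TT-201.PV", "pH-201.PV"]

gaps = audit_data_access(required, available)
for unit, missing in gaps.items():
    print(f"{unit}: missing {', '.join(missing)}")
```

Here the audit would report that the dissolved oxygen measurement on the bioreactor is not being collected, a gap to close before historical data collection begins.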

Dave has led several process manufacturers through these preparatory steps before the process of historical data collection and model development begins. In a post, Applying Batch Analytics to Fermentation Processes, I describe the historical data collection and model development processes.

Training and performance evaluation are the two final steps. Training is critical to make sure the models continue to operate and are maintained over time and to give the operations staff insights into the key areas of focus. Performance evaluation is important to understand the benefits of the investment and to look for additional opportunities where batch analytics can be applied.